KEY COMPETENCE

safety-critical systems

Regardless of the industrial domain (automotive, aerospace, medical, etc.), most of the functions performed by embedded systems depend on complex, high-performance computation and communication architectures that leverage the latest technologies in chip design and networking.

In this context, we address the ever-renewed challenge of exploiting those technologies efficiently, i.e., maximizing safety and the use of the available resources while minimizing the development effort.

To accomplish this goal, our competence center carries out research along three main axes:

- real-time, to ensure that services will be delivered in a predictable and deterministic time;

- hardware/software co-design, concurrency, and distribution to ensure that the processing capabilities of the hardware will be exploited efficiently;

- dependability, to ensure that the system will behave as intended, with the appropriate level of reliability and availability in the presence of physical or intentional faults.

For each of these axes, we offer our expertise on the various elements of a computing architecture, including the processing units (SoCs, FPGAs, GPUs, neural network accelerators…), the real-time operating systems and hypervisors, the communication networks, and the tools and technologies used to build executable code from a model (a C program, a machine learning model).

R&T fields

REAL-TIME COMPUTING

Here, we address a dual objective. On the one hand, we must provide computing platforms that meet the needs of new computationally-intensive functions providing high value-added services with more autonomy. On the other hand, we must master the complexity inherent in these platforms to provide reliable services as well as the required justifications to the certification authorities.

A fundamental activity in the development of most embedded systems is demonstrating compliance with timing constraints in all situations. However, with modern chips designed to deliver very high performance most of the time rather than predictable performance all of the time, this activity has become a real issue. To tackle it, we have been exploring methods and tools to ensure compliance with timing properties for several years. Our research addresses the multiple dimensions of the problem: from the design of timing-deterministic processor architectures, to the analysis of commercial processors for timing interference and worst-case execution times, to the use of timing-deterministic computation models.
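
As an illustration of what demonstrating compliance with timing constraints can look like, here is a minimal sketch of the textbook response-time analysis for fixed-priority preemptive scheduling on a single core. It is not a description of our tools: the function name and the task set are hypothetical, deadlines are assumed equal to periods, and the worst-case execution times are assumed to be already known, which is precisely the hard part on modern processors.

```python
def response_times(tasks):
    """Worst-case response times under fixed-priority preemptive scheduling.

    tasks: list of (wcet, period) integer pairs, highest priority first.
    Assumption: each task's deadline equals its period.
    Returns one entry per task: the response time, or None if the
    deadline can be missed.
    """
    results = []
    for i, (c_i, t_i) in enumerate(tasks):
        r = c_i
        while True:
            # Interference from every higher-priority task released in [0, r).
            # -(-r // t_j) is an integer ceiling of r / t_j.
            interference = sum(-(-r // t_j) * c_j for c_j, t_j in tasks[:i])
            r_next = c_i + interference
            if r_next > t_i:      # deadline exceeded: not schedulable
                r = None
                break
            if r_next == r:       # fixed point reached
                break
            r = r_next
        results.append(r)
    return results


# Hypothetical task set: (WCET, period) in the same time unit.
print(response_times([(1, 4), (2, 6), (3, 12)]))
```

The iteration only gives a sound bound if the execution times fed into it are themselves sound, which is why interference analysis and worst-case execution time estimation come first.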

DEPENDABLE COMPUTING ARCHITECTURES

The emergence of very large-scale constellations of small, low-cost satellites has created the opportunity to use high-performance Commercial Off-The-Shelf (COTS) components. These are not specifically designed for the harsh space environment, which involves, for instance, high levels of radiation. In this context, we propose components and architectures that implement Fault Detection, Isolation, and Recovery (FDIR) strategies to tolerate the effects of radiation (SEUs, MBUs, etc.). This includes, in particular, the design and deployment of fault-tolerant IP architectures on FPGAs. In addition, we develop modeling and evaluation means to estimate the availability of the resulting architecture, for example to perform availability/cost trade-off analyses.
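
To make the idea of tolerating a single-event upset concrete, the sketch below shows one classic fault-masking pattern, triple modular redundancy with a bitwise majority voter, together with the standard availability formula for a 2-out-of-3 arrangement. This is an illustrative assumption rather than one of our architectures: the function names are hypothetical, the replicas are assumed independent, and the voter is assumed perfect. An upset affecting the same bit in two replicas would not be masked.

```python
def majority_vote(a: int, b: int, c: int) -> int:
    """Bitwise 2-out-of-3 vote: each output bit takes the value held
    by at least two of the three replicas."""
    return (a & b) | (a & c) | (b & c)


def availability_2oo3(unit_availability: float) -> float:
    """Availability of a 2-out-of-3 system of identical units.

    Assumptions: independent unit failures and a perfect voter.
    Probability that at least two of three units are up: 3A^2 - 2A^3.
    """
    a = unit_availability
    return 3 * a**2 - 2 * a**3


golden = 0b1011_0010
upset = golden ^ (1 << 5)  # one bit flipped in a single replica
assert majority_vote(golden, upset, golden) == golden

# Hypothetical unit availability, compared against the redundant arrangement.
print(availability_2oo3(0.9))
```

Setting the availability gain against the cost of tripling the logic is the kind of trade-off such a model is meant to inform.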

TIME PREDICTABLE COMMUNICATIONS

An embedded system generally comprises sensors, actuators, and processing units interconnected via one or more communication networks, whose timing characteristics are essential inputs to the dimensioning and dependability assessment of the system. By covering a wide range of use cases and performance objectives, the emerging Time-Sensitive Networking (TSN) set of standards replaces many existing domain-specific networking technologies. In this context, we help industrial partners understand the standards, define the TSN subset suited to their needs, and evaluate its actual performance, reliability, and, more generally, capabilities. Our research also covers the configuration of TSN networks, the estimation of upper bounds on end-to-end latencies, and the analysis of synchronization capabilities. This work is supported by an evaluation platform, representative of actual target architectures and equipped with traffic generation means, that allows various TSN configurations to be evaluated. This research field is tightly coupled with the real-time computing and dependable computing architectures fields.
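
One common way to reason about an upper bound on end-to-end latency is network calculus. The sketch below is a generic textbook bound, not our evaluation tooling and not a model of any specific TSN shaper: it assumes a flow constrained by a token bucket (burst in bits, sustained rate in bit/s) and hops that each guarantee a rate-latency service. The function name and the numeric values are hypothetical.

```python
def end_to_end_delay_bound(burst_bits, rate_bps, hops):
    """Upper bound on end-to-end delay, in seconds.

    burst_bits, rate_bps: token-bucket constraint of the flow.
    hops: list of (service_rate_bps, latency_s) rate-latency guarantees,
          one per traversed node (assumed values, not derived from a
          real TSN configuration).
    Concatenating rate-latency servers yields a rate-latency server with
    the minimum rate and the summed latencies, so the burst is paid once.
    """
    min_rate = min(rate for rate, _ in hops)
    if rate_bps > min_rate:
        raise ValueError("flow rate exceeds the guaranteed service rate")
    total_latency = sum(latency for _, latency in hops)
    return total_latency + burst_bits / min_rate


# Hypothetical flow: 1500-byte burst at 1 Mbit/s across two switches.
bound = end_to_end_delay_bound(
    burst_bits=12_000,
    rate_bps=1e6,
    hops=[(100e6, 10e-6), (1e9, 5e-6)],
)
print(f"{bound * 1e6:.1f} us")
```

In practice, the per-hop service guarantees depend on the chosen TSN mechanisms and their configuration, which is why analytical bounds are best cross-checked against measurements on a representative platform.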

EMBEDDABILITY AND VERIFICATION OF ML APPLICATIONS

Building on our research into the hardware aspects of real-time computing, and leveraging the “AI for critical system” Competence Center, our research in this field focuses on helping industrial partners find appropriate and safe solutions for deploying machine learning algorithms (or “models”) on embedded platforms. This activity relies on our expertise in machine learning algorithms, deployment toolchains (e.g., TFLite, TVM), and hardware platforms. Beyond deployment issues, we also investigate how to define and formalize the Verification and Validation activities of systems embedding machine learning algorithms, using Model-Based Systems Engineering techniques and Assurance Cases.

Our offer

- Support for the analysis of timing properties of software, hardware, and communication architectures

- Support for the optimal deployment of Machine Learning algorithms on processing cores, GPUs, FPGAs, etc.

- Platform for the evaluation of Machine Learning deployment solutions for embedded systems

- Analysis of TSN communication standards, support for the configuration of TSN networks

- Platform for the evaluation of TSN

- Methodological guides

- Thesis reports, scientific publications, conference presentations

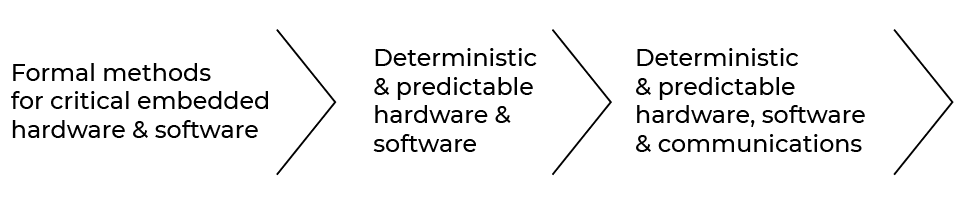

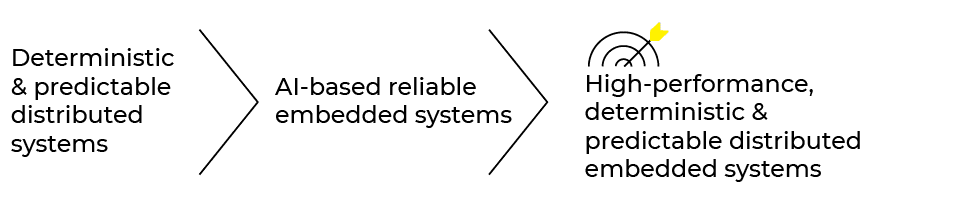

ROAD MAP

2020 > 2025
